Playdate - QA/Release Manager

At Panic, I worked on the Playdate handheld game system, performing quality assurance and release management for the Playdate’s custom OS and SDK.

The Playdate is a playful handheld game system with a black-and-white screen and a crank, designed by the folks at Panic. It runs on a proprietary operating system and software development kit developed in-house, not on Linux or Android like many other handheld devices. My role on the team was to support new releases of the Playdate OS and SDK, making sure our updates were deployed safely to game developers and players. In the worst case, a bad update could irreversibly brick end users' devices. QA on these releases was therefore critical, even though I was the only QA staff member on the team. I oversaw more than 15 OS and SDK updates during my time at Panic.

The OS and SDK release deployment process included:

- testing the new OS firmware on-device with multiple games and applications
- validating that SDK tools, documentation, and development examples all functioned correctly
- conducting an internal beta among Panic employees, and sometimes an external beta with the Playdate gamedev community, to catch further bugs
- deploying the new OS firmware to thousands of users' Playdates via Memfault, a firmware management web service
- uploading the new SDK files to Panic's website using internal CMS tools
- announcing the OS/SDK update on the Playdate developer forums and on a fan-moderated Discord server

In addition to the release process outlined above, one of my responsibilities when I started at Panic was to individually test every merge request completed by developers. Because I was the only one doing this testing, and because there was a substantial backlog of merge requests to catch up on when I joined, manually testing every merge request became an onerous burden and a choke point in the development and release process.

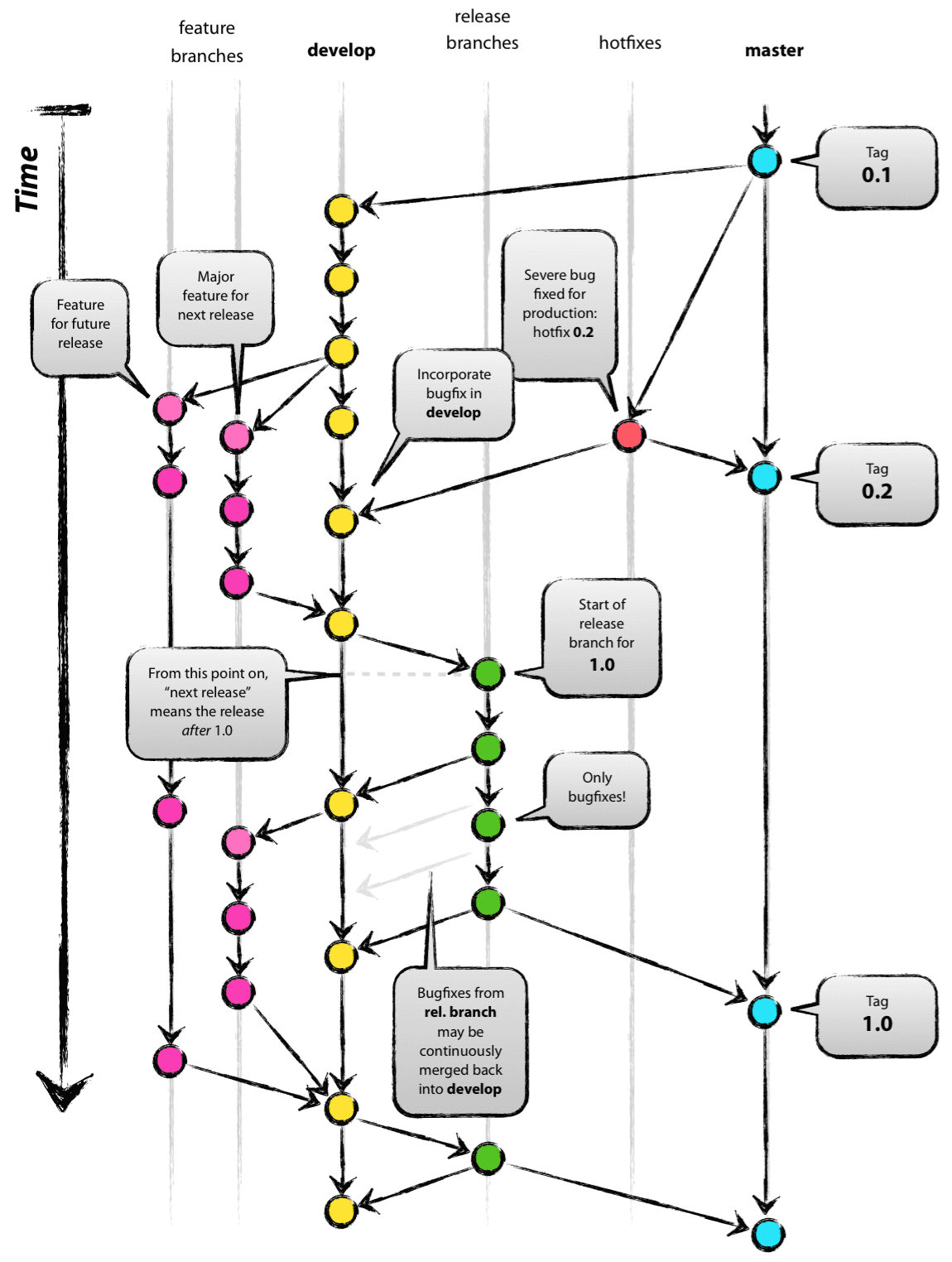

To address this, I researched alternative ways of handling merge requests. My proposed methodology was based on "git-flow," a branching model outlined in this blog post (and illustrated in the diagram above), and on my experience with how merge requests were handled at 343 Industries. Code quality at the MR level would be safeguarded by a three-pronged approach: 1) each developer would test their own merge requests, 2) each merge request would receive a code review, and 3) automated tests would run against each merge request. (Before this, prongs 1 and 2 were not consistently practiced.) Then I, as the QA team, would manually test the merged changes together once they had been compiled into a release candidate.

I presented this plan to the development team, and after gathering and incorporating their feedback, we adopted it. The new process shared the testing load more equitably between QA and the developers, freeing me to focus on other aspects of my role that needed attention.